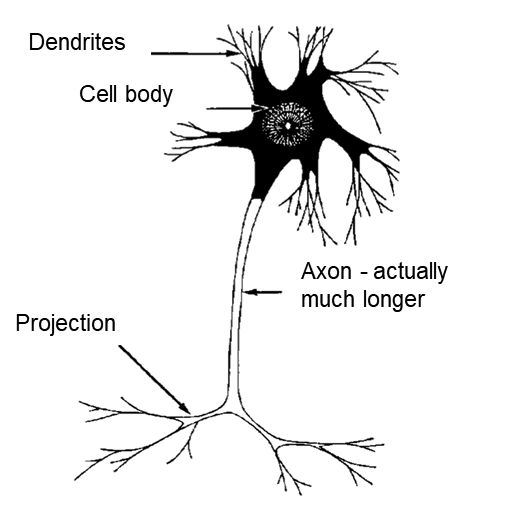

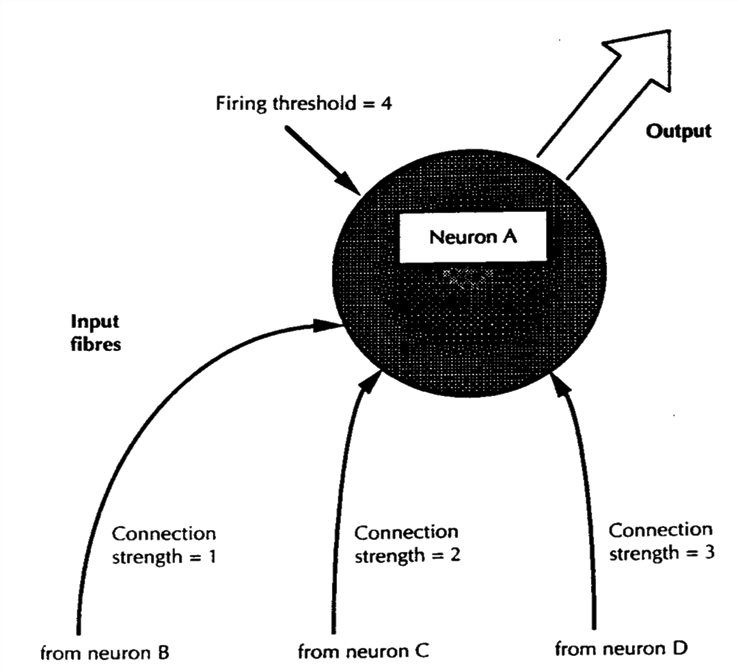

A neuron is a cell whose body receives electrical input through dendrites and sends electrical pulses down an axon to other neurons, as a tree sends water from roots to leaves (Figure 6.10). The input arriving at the dendrites has to reach a threshold for the neuron to fire, so in Figure 6.11 input from neurons B and D together fires neuron A, but input from B and C does not, as it doesn’t reach A’s threshold of four. Neurons thus pass on electrical impulses selectively.
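The threshold rule can be sketched in a few lines of Python. This is only an illustration: the input strengths for B, C and D are hypothetical values chosen to match the description, not figures taken from Figure 6.11, and `fires` is a made-up helper name.

```python
# Hypothetical input strengths; the actual values in Figure 6.11 are not reproduced here.
INPUT_STRENGTH = {"B": 2, "C": 1, "D": 2}
THRESHOLD = 4  # firing threshold of neuron A, as stated in the text


def fires(active_inputs, strengths=INPUT_STRENGTH, threshold=THRESHOLD):
    """Return True if the summed input from the active neurons reaches the threshold."""
    total = sum(strengths[name] for name in active_inputs)
    return total >= threshold


print(fires(["B", "D"]))  # True: 2 + 2 reaches the threshold of 4
print(fires(["B", "C"]))  # False: 2 + 1 falls short of 4
```

Any assignment of strengths where B plus D reaches four and B plus C does not would illustrate the same point.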

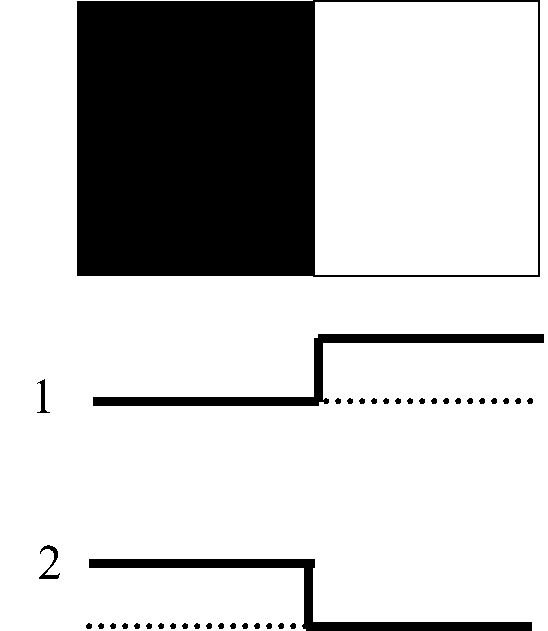

In embryos, nerves grow out from the brain to form the retina, so light entering the eye touches the brain directly. If the retina were a photoelectric cell, it could pass on pixel data with, say, 1 for black and 0 for white. It would work equally well with 0 for black and 1 for white, as long as the definition is absolute, but a designer would have to set that.

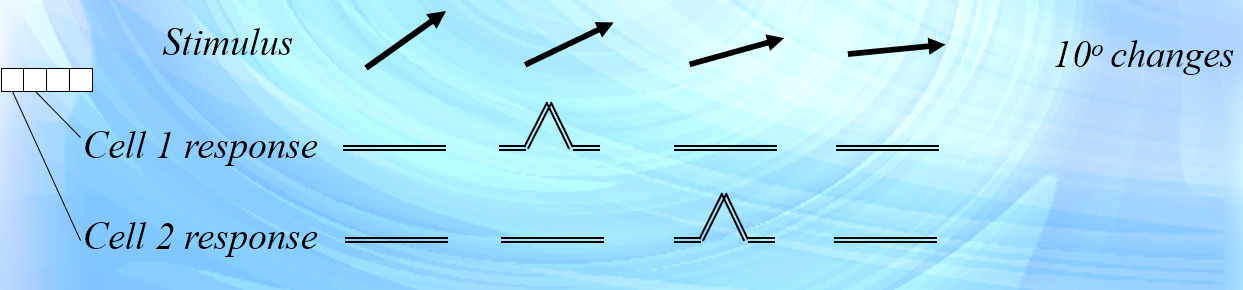

Brains had no designer so evolution took both options, as it always does. One type of retinal cell responds to light above the background level and another type responds to light below that level. In Figure 6.12, cell 1 responds to white and cell 2 to black. Instead of defining data absolutely, retinal cells respond relative to background light, exciting or inhibiting each other to amplify the borders from which object shapes are later derived.
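A minimal sketch of this relative coding follows, under strong simplifying assumptions: the response is taken to be linear, the "background" for each position is just the mean of its two neighbours in a single row of light values, and the function names `on_cell`, `off_cell` and `border_signal` are hypothetical. Real retinal circuits are far more complex.

```python
def on_cell(light, background):
    """Responds only to light above the background level (assumed linear response)."""
    return max(0.0, light - background)


def off_cell(light, background):
    """Responds only to light below the background level (assumed linear response)."""
    return max(0.0, background - light)


def border_signal(row):
    """Compare each interior position with the mean of its two neighbours.

    Returns one (on_response, off_response) pair per interior position.
    """
    signals = []
    for i in range(1, len(row) - 1):
        surround = (row[i - 1] + row[i + 1]) / 2
        signals.append((on_cell(row[i], surround), off_cell(row[i], surround)))
    return signals


# A dark region next to a bright region: only the positions at the border respond.
print(border_signal([2, 2, 2, 8, 8, 8]))
# [(0.0, 0.0), (0.0, 3.0), (3.0, 0.0), (0.0, 0.0)]

# A uniform region gives no response, whatever its absolute brightness.
print(border_signal([5, 5, 5, 5]))
# [(0.0, 0.0), (0.0, 0.0)]
```

Because both cell types measure a difference from the local background, uniform regions are silent and only the border between dark and light produces a signal.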

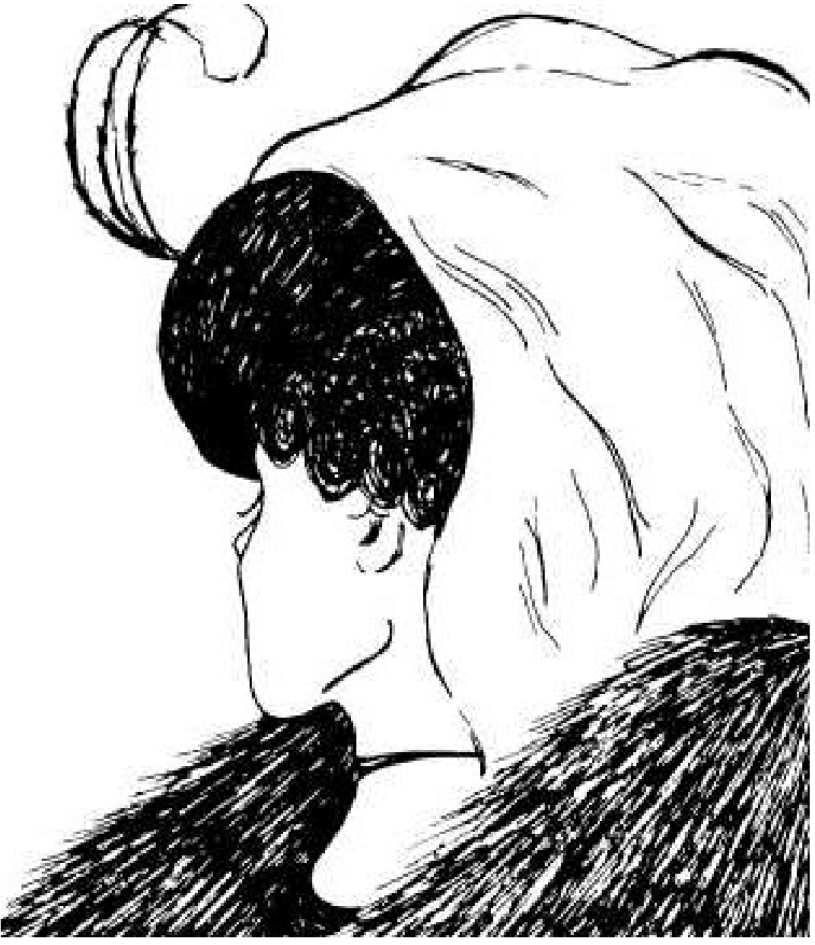

Vision identifies an object by making one side of a border the figure and the other the ground. In Figure 6.13, making black the figure just gives blobs, but making it the background lets you read “MAIL BOX”. The brain uses figure-ground context to resolve ambiguity in visual data, as one must choose the right figure-ground context to see an object.

The human cortex is a nested hierarchy that processes data in six layers labelled I to VI, as lower units feed higher ones. The first step up from the single nerve is the microcolumn, a group of a hundred or so nerves about the thickness of a hair:

“… current data on the microcolumn indicate that the neurons within the microcolumn receive common inputs, have common outputs, are interconnected, and may well constitute a fundamental computational unit of the cerebral cortex …” (Cruz, 2005)

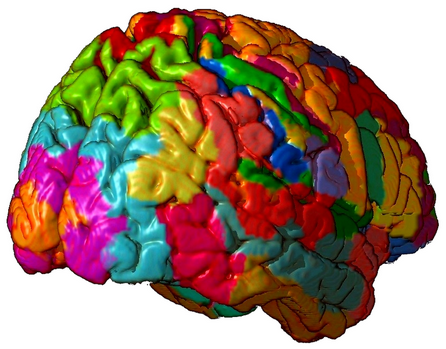

About a hundred microcolumns then form a cortico-cortical column that sends axons to nerves nearby. These columns then form a macrocolumn of about a million nerves, about 3 mm wide, with cortical links. Macrocolumns then form about 32 Brodmann areas (Figure 6.14) of maybe a hundred million nerves each, for functions like language.


The cortical processing layers are (Nunez, 2016) p91:

1. Microcolumns. A hundred or so nerves about 0.03 mm wide.

2. Cortico-cortical columns. A thousand or so nerves about 0.3 mm wide.

3. Macrocolumns. A million or so nerves about 3 mm wide.

4. Brain areas. A hundred million or so nerves of various sizes.

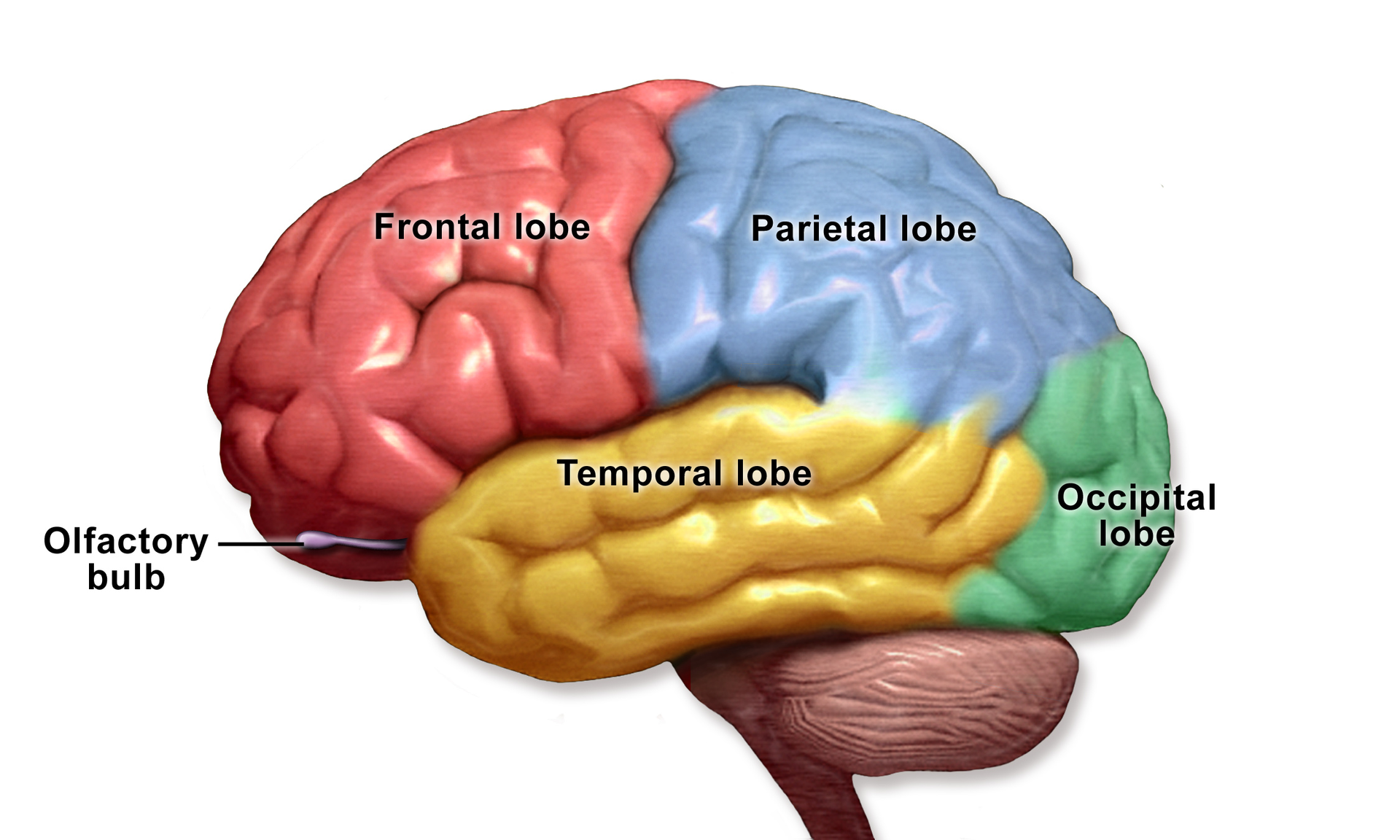

Brain areas then form four lobes about 50 mm wide separated by deep fissures (Figure 6.15). The occipital lobe handles visual data, the parietal lobe handles body image and space relations, the temporal lobe handles sound and memory, and the frontal lobe handles plans and intentions. The frontal lobe can also stop other parts from carrying out socially improper acts, so a person with frontal lobe damage may know how to behave but can’t stop inappropriate acts like touching. The four lobes together form a hemisphere, and the two hemispheres together form the cortical brain.

The visual hierarchy starts when the eye detects photons. This data is then subject to layer upon layer of processing to detect relevant features. For example, some nerves in layer IV fire for different line angles (Figure 6.16) and others for other features.
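As a rough illustration of a feature detector tuned to line angle, the sketch below scores a tiny 3x3 patch of light values against four templates and reports the best match. The templates, the angle labels and the function names are all hypothetical, chosen only to make the idea concrete; they are not a model of how layer IV nerves actually compute.

```python
# Hypothetical 3x3 templates, one per preferred line angle in degrees.
TEMPLATES = {
    0:   [[-1, -1, -1], [2, 2, 2], [-1, -1, -1]],   # horizontal line
    90:  [[-1, 2, -1], [-1, 2, -1], [-1, 2, -1]],   # vertical line
    45:  [[-1, -1, 2], [-1, 2, -1], [2, -1, -1]],   # rising diagonal
    135: [[2, -1, -1], [-1, 2, -1], [-1, -1, 2]],   # falling diagonal
}


def response(patch, template):
    """Sum of element-wise products: high when the patch matches the template."""
    return sum(p * t
               for patch_row, template_row in zip(patch, template)
               for p, t in zip(patch_row, template_row))


def preferred_angle(patch):
    """Return the angle whose detector responds most strongly to the patch."""
    responses = {angle: response(patch, t) for angle, t in TEMPLATES.items()}
    return max(responses, key=responses.get)


vertical_line = [[0, 1, 0],
                 [0, 1, 0],
                 [0, 1, 0]]
print(preferred_angle(vertical_line))  # 90
```

Each template acts like a detector that fires strongly for one line angle and weakly or not at all for the others.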

Scientists estimate that each eye inputs about 8.75 Megabits a second and the brain in total receives over 20 Mbps. As James said in 1892, our first impression was probably information overload:

“The baby, assailed by eyes, ears, nose, skin, and entrails at once, feels it all as one great blooming, buzzing confusion”

Computers handle information overload by compression that reduces the data in a video but keeps the key features. The brain does the same by reducing sense data to features that represent borders, shapes or objects. When a baby’s brain transforms data from millions of optic nerves to see that an object is a cup, it handles the world better. The brain helps us survive by reducing sense data to key features.

Computer processing is mostly linear but brain hierarchies have bottom-up, lateral and top-down links. Sense data flows up and down the processing hierarchy as a two-way flow. Top-down paths predict, interrogate and check lower processing as higher processing “experts” check for consistency or errors (Dehaene, 2014) p139. Bottom-up paths analyze data as computers do, but lateral paths establish context and top-down links can rerun lower processing.

Is Figure 6.17 an old or young lady? If you see a young lady, can you also see an old one, or the reverse? To do this you must rerun your visual processing. The visual system makes a best guess, but you can ask for a redo because nerves go down as well as up. Lower processing is “out of sight and out of mind” but it can be redone by top-down control. All perception is a hypothesis of an ambiguous world.

Subconscious processing might be assumed to be primitive but the spinning ballerina illusion (Figure 6.18) suggests otherwise. Click on the link to see a spinning ballerina whose rotation is ambiguous, so you might see her spin clockwise or anti-clockwise.

Try to see her spin the other way. If you can’t, pause the video and if you see an extended leg at the front, imagine it at the back, or vice-versa. Restart the video and if she spins the other way, you just reprogrammed some complex unconscious visual processing.

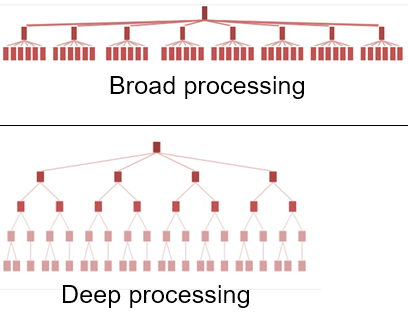

The optic nerve has about a million axons but the auditory nerve has only about 50,000, so the processing base for sound is narrower than for vision. In Figure 6.19, the same processing resources applied to a narrower base allow deeper processing. There is a trade-off between processing breadth and depth, so if hemispheres of equal capacity specialize, the narrower base of sound can be processed deeper than the broad base of vision. The hemisphere that specializes in sound can develop language because a narrow base allows the deeper processing that language requires. One hemisphere specializes in the deep processing of language while the other favors the broad processing of spatial analysis.
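The breadth-depth trade-off can be put in rough numbers. The sketch below assumes, purely for illustration, that a fixed processing budget is shared evenly across the input axons, so the depth available per input is the budget divided by the width of the base. The budget figure and the function name `depth_per_input` are hypothetical; only the axon counts come from the text.

```python
OPTIC_AXONS = 1_000_000   # approximate count given in the text
AUDITORY_AXONS = 50_000   # approximate count given in the text


def depth_per_input(total_resources, base_width):
    """Processing available per input if a fixed budget is shared evenly (an assumption)."""
    return total_resources / base_width


resources = 1_000_000_000  # hypothetical budget in arbitrary units

visual_depth = depth_per_input(resources, OPTIC_AXONS)
auditory_depth = depth_per_input(resources, AUDITORY_AXONS)

print(visual_depth)                   # 1000.0
print(auditory_depth)                 # 20000.0
print(auditory_depth / visual_depth)  # 20.0
```

Under this simple assumption the ratio depends only on the axon counts, so the narrower auditory base gets about twenty times the depth per input whatever budget is chosen.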
