Legitimate access control is essential to the trust needed for online social interaction. The following rules suggest legitimate universal rights that any social technology designer can implement, so that the Internet can be civilized out of its current Wild West status by code, not guns. Others are invited to support this effort, whether by critique, development or use.

Accountability Rule: All rights to access control entities must be allocated to actors at all times.

Software has no right to act of its own accord because it is not accountable. Just as delivery people who rearrange your lounge while installing a new TV are taking a liberty, so is a new browser that changes your browser defaults. In law, might is not right and “because I can” is not a reason. Modern smartphones allow other liberties, like apps that upload address book contact lists to use for their own purposes. In late 2011, it was found that Carrier IQ software was recording and uploading keystrokes made, phone numbers dialed and texts sent on about 140 million smartphones. An operating system whose access control followed the accountability rule would not allow this; the Android platform, for example, requires apps to ask before accessing the owner’s data, instead of letting them just steal it.

Freedom Rule: A persona always holds all rights to itself.

In 1993, an avatar called Mr. Bungle, controlled by an NYU undergraduate, gained the power of “voodoo” in an online game called LambdaMOO: the ability to control other players. The player then used it to virtually “rape” several female characters, making them respond as if they enjoyed it (Dibbell, 1993). No physical-world law was broken, as there was no physical contact and so no legal rape, but the LambdaMOO community was so outraged that one of the “wizards” unilaterally deleted the Mr. Bungle character. After much discussion, the system was altered to make “voodoo” an illegal power. The social requirement that one should control oneself carried over into cyberspace. Likewise, when a person dies, their online persona should be deactivated, as it is no longer an actor. Facebook took a while to realize that when a person dies, their family doesn’t want out-of-control software agents sending jovial reminders to wish them a Happy Birthday. The social logic again prevailed: one can now memorialize an account so it can be viewed but not logged into or changed. I should not have to die to memorialize a site I own, but should be able to do it at any time, knowing it is irreversible.

Privacy Corollary: A persona always holds the right to display itself.

Privacy is not secrecy but the right to display oneself, so one may choose to be public. Current software tends to ignore privacy until challenged; Facebook, for example, only changed its default for new accounts from public to friends-only when its privacy practices came under scrutiny. Yet even this gesture ignores the social rule that all display is up to the person, not Facebook. How hard is it, when setting up an account, to ask whether it should be displayed to:

- Nobody (default)

- Everybody

- Friends only

Privacy is not hard. People have the choice to display personal data because they own themselves.
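The account setting described here can be sketched as a visibility check whose default is Nobody and which only the persona itself may change. This is a minimal Python sketch under stated assumptions, not any platform's real code: the names `Visibility`, `Persona`, `set_visibility` and `can_view` are hypothetical, and friends are assumed to be a simple set of names.

```python
# Hypothetical sketch of the Privacy Corollary; all names are illustrative.
from dataclasses import dataclass, field
from enum import Enum


class Visibility(Enum):
    NOBODY = "nobody"
    EVERYBODY = "everybody"
    FRIENDS_ONLY = "friends only"


@dataclass
class Persona:
    name: str
    friends: set[str] = field(default_factory=set)
    # Display is the persona's choice, and the default is Nobody.
    visibility: Visibility = Visibility.NOBODY


def set_visibility(actor: str, persona: Persona, visibility: Visibility) -> None:
    """Only the persona itself may change who can see it."""
    if actor != persona.name:
        raise PermissionError(f"{actor} cannot change how {persona.name} is displayed")
    persona.visibility = visibility


def can_view(viewer: str, persona: Persona) -> bool:
    """Return True if `viewer` may see `persona` under the persona's own choice."""
    if viewer == persona.name:
        return True  # a persona can always see itself
    if persona.visibility is Visibility.EVERYBODY:
        return True
    if persona.visibility is Visibility.FRIENDS_ONLY:
        return viewer in persona.friends
    return False  # Visibility.NOBODY
```

A new account stays invisible until its owner opts in; making accounts public by default would mean changing the default on `Persona`, which is exactly the choice this rule reserves for the person.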

Containment Rule: Every entity is dependent upon a parent space, up to the system space.

Because everything exists in a parent space, online spaces can nest within spaces. For example, suppose Attila, a discussion forum owner, finds a post by Luke, an online contributor, to be offensive, but Luke disagrees. What can happen? More importantly, what should happen? Is Luke free to say what he wants? Can Attila simply delete the item because it is his forum? Can he edit the item to remove the offensive part? Can he ban Luke from entering the forum? Can he remove Luke from the system? Can he alter Luke’s name on the post to “Gross-Luke” until he learns a lesson? Can Luke fix the post and resubmit it? Since such situations arise online every day, it’s time to set some standards. The access control rules outlined here suggest that:

1. Luke owns the “offensive” post, so Attila cannot delete or edit it.

2. Luke owns his own persona, so Attila cannot change the author name to “Gross-Luke”.

3. Attila owns the forum, so he can reject the display of Luke’s post.

4. Luke can edit his post to be reconsidered for display by Attila.

5. Attila can ban Luke from his forum.

6. Attila can moderate Luke’s further posts.

7. Attila can ask the administrator to remove Luke and all his posts.
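The outcomes listed above can be sketched as a small permission model. This is a minimal Python sketch under stated assumptions: the names `Post`, `Forum`, `submit`, `edit`, `set_display`, `ban` and `moderate` are hypothetical, a post's display status is assumed to be one of three strings, and rule 7 is left out because removal from the system is the administrator's decision, not the forum owner's. Rule 2 is covered by omission: there is no operation that lets the forum owner change a post's author.

```python
# Hypothetical sketch of the Attila and Luke outcomes; all names are illustrative.
from dataclasses import dataclass, field


@dataclass
class Post:
    author: str                  # the persona that owns the post (rules 1 and 2)
    text: str
    status: str = "pending"      # "pending", "displayed" or "rejected"


@dataclass
class Forum:
    owner: str
    posts: list[Post] = field(default_factory=list)
    banned: set[str] = field(default_factory=set)
    moderated: set[str] = field(default_factory=set)


def _require(condition: bool, reason: str) -> None:
    if not condition:
        raise PermissionError(reason)


def submit(forum: Forum, post: Post) -> None:
    _require(post.author not in forum.banned, "author is banned from this forum")  # rule 5
    # Rule 6: posts by a moderated author wait for the owner; others display at once.
    post.status = "pending" if post.author in forum.moderated else "displayed"
    forum.posts.append(post)


def edit(actor: str, post: Post, text: str) -> None:
    _require(actor == post.author, "only the author may edit a post")  # rule 1
    post.text = text
    if post.status == "rejected":
        post.status = "pending"  # rule 4: an edited post goes back for reconsideration


def set_display(actor: str, forum: Forum, post: Post, show: bool) -> None:
    # Rule 3: the forum owner decides what displays, without touching the content.
    _require(actor == forum.owner, "only the forum owner decides display")
    _require(post in forum.posts, "post is not in this forum")
    post.status = "displayed" if show else "rejected"


def ban(actor: str, forum: Forum, persona: str) -> None:
    _require(actor == forum.owner, "only the forum owner can ban")  # rule 5
    forum.banned.add(persona)


def moderate(actor: str, forum: Forum, persona: str) -> None:
    _require(actor == forum.owner, "only the forum owner can moderate")  # rule 6
    forum.moderated.add(persona)
```

The design choice is that the forum owner's tools all act on display and entry, never on the post's text or author, so Luke always knows what happened to his own content.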

Balancing legitimate rights allows better interaction. If Attila just deleted the post, Luke might assume a system error and resubmit it, unaware it had been rejected. If Attila edits the post, others may lose confidence in the forum, even more so if Attila changes Luke’s name, i.e. personally attacks him. But Attila can refuse to display Luke’s post, which may warn others. If Luke sees his post rejected for display, he can edit it and ask again to display it in Attila’s space. He also knows that submitting more such posts risks removal from the system entirely. The social balance is that if Attila controls his forum too much it will “die”, while if Luke is a troll he will be excluded.

What if Luke’s “offensive” post sits under a higher board run by Genghis, who does not find the post offensive? If both Attila and Genghis could take over Luke’s post by editing it, the result could be an “edit war” between them. Instead, Luke could appeal to Genghis to “depose” Attila, i.e. to take back his delegated control and give it to a more tolerant person. Genghis could even refuse to display Attila’s board entirely. While the code answer to social issues is “whatever you want”, the social answer is legitimate rights that increase trust.

Offspring Rule: The owners of any ancestor spaces have the right to view any offspring created.

One can create a private video on YouTube that no one else can see, but “no one” does not include YouTube administrators. Likewise, no space owner can ban the system administrator, nor can the owner of a discussion thread ban the owner of the forum in which it is contained. In the above example, Attila cannot ban Genghis from looking at Luke’s post.
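The Containment and Offspring rules together suggest a simple structure: each entity points to its parent space, and any owner found walking up that chain can view it and cannot be banned from below. This is a minimal Python sketch under stated assumptions; the names `Entity`, `ancestors`, `ancestor_owners`, `can_view_private` and `can_ban` are hypothetical, and spaces and posts are assumed to share one entity type.

```python
# Hypothetical sketch of the Containment and Offspring rules; all names are illustrative.
from dataclasses import dataclass
from typing import Iterator, Optional


@dataclass
class Entity:
    name: str
    owner: str
    parent: Optional["Entity"] = None  # None only for the system space


def ancestors(entity: Entity) -> Iterator[Entity]:
    """Containment Rule: walk up the parent spaces, nearest first, to the system space."""
    current = entity.parent
    while current is not None:
        yield current
        current = current.parent


def ancestor_owners(entity: Entity) -> set[str]:
    return {space.owner for space in ancestors(entity)}


def can_view_private(viewer: str, entity: Entity) -> bool:
    # Offspring Rule: the owner of every ancestor space may view what is created
    # inside it, even when the entity's owner has made it private.
    return viewer == entity.owner or viewer in ancestor_owners(entity)


def can_ban(actor: str, space: Entity, persona: str) -> bool:
    # A space owner may ban others, but never the owner of an enclosing space.
    protected = ancestor_owners(space) | {space.owner}
    return actor == space.owner and persona not in protected
```

For the example in the text, a post owned by Luke inside Attila's forum, inside Genghis's board, inside the system space, is visible to all three owners above it, and Attila cannot ban Genghis.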

Communication Rule: Every communication act requires prior mutual consent.

When systems like email give anyone the right to send a message to anyone, the result is spam: unwelcome messages. In contrast, in Facebook, chat, Skype and Twitter, one needs prior permission to message someone. To “follow” someone is a positive permission to receive their messages. In legitimate communication, a channel must be opened by mutual consent before messages are sent, as detailed in Whitworth and Liu (2009). Giving online communicators control of their channels protects them from being pestered with spam by unknown people or online agents.
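The consent step can be sketched as a channel that must be requested and accepted before any message flows. This is a minimal Python sketch, not the protocol detailed in Whitworth and Liu (2009); the `Messenger` class and its method names are hypothetical, and a request is assumed to carry only the requester's identity, with no message body.

```python
# Hypothetical sketch of the Communication Rule; all names are illustrative.
from collections import defaultdict


class Messenger:
    """Messages flow only over channels that both parties have agreed to open."""

    def __init__(self) -> None:
        self.requests: set[tuple[str, str]] = set()   # (requester, recipient) awaiting consent
        self.channels: set[frozenset[str]] = set()    # open channels, as unordered pairs
        self.inboxes: defaultdict[str, list[tuple[str, str]]] = defaultdict(list)

    def request_channel(self, requester: str, recipient: str) -> None:
        # The request carries no message body, so it cannot itself deliver spam.
        self.requests.add((requester, recipient))

    def accept(self, recipient: str, requester: str) -> None:
        if (requester, recipient) not in self.requests:
            raise ValueError(f"no pending request from {requester} to {recipient}")
        self.requests.remove((requester, recipient))
        self.channels.add(frozenset((requester, recipient)))

    def close(self, persona: str, other: str) -> None:
        # Either party may withdraw consent at any time.
        self.channels.discard(frozenset((persona, other)))

    def send(self, sender: str, recipient: str, text: str) -> None:
        if frozenset((sender, recipient)) not in self.channels:
            raise PermissionError(f"no channel between {sender} and {recipient}")
        self.inboxes[recipient].append((sender, text))
```

Email behaves as if every pair of addresses had an open channel; here an unknown sender can at most ask, and the recipient decides.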

Public Domain Rule: The full transfer of non-personal information into the public domain is not reversible.

This rule is the basis of the current open access and open source movements. Creative Commons licenses let donors clarify whether what they give includes the right to edit it (NoDerivatives means no editing) or sell it (NonCommercial means no selling), and whether recipients may take it into personal ownership (ShareAlike requires derived works to keep the same public rights). The idea is that once information is made public, it can never again be private. The explicit goal is the synergy of a global information commons that benefits all.
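The irreversibility can be sketched as a one-way state change: once a work's owner is cleared, no operation can assign it to anyone again. This is a minimal Python sketch under stated assumptions; the `Work` class, its methods, and the `personal` flag are hypothetical, and licence conditions such as NoDerivatives are not modelled.

```python
# Hypothetical sketch of the Public Domain Rule; all names are illustrative.
from typing import Optional


class Work:
    def __init__(self, owner: str, personal: bool = False) -> None:
        self.owner: Optional[str] = owner   # None once the work is in the public domain
        self.personal = personal            # personal data is outside this rule

    @property
    def public(self) -> bool:
        return self.owner is None

    def release(self, actor: str) -> None:
        if actor != self.owner:
            raise PermissionError("only the owner can release a work")
        if self.personal:
            raise ValueError("the rule covers only non-personal information")
        self.owner = None  # full transfer: no individual owner remains

    def assign(self, actor: str, new_owner: str) -> None:
        # Once public, nobody (the former owner included) can take the work private again.
        if self.public:
            raise PermissionError("a public domain transfer is not reversible")
        if actor != self.owner:
            raise PermissionError("only the owner can assign a work")
        self.owner = new_owner
```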

Space Entry Rule: A space owner can unilaterally ban or accept persona entry.

The right of a space owner to “lock out” those who misbehave is essential, as one “troll” can in effect “kill” an online discussion. It also allows what many see as the future of online interaction: invitation spaces. For example, one can set up an open discussion area on a topic, then invite those who add value to an invitation-only discussion space. This also addresses the problem of online “bots”, automated software that trawls discussions to post empty comments pushing some sales pitch with no relevance at all to the topic. Defenses like CAPTCHA (Completely Automated Public Turing test to tell Computers and Humans Apart) aim to be easy on humans but hard on bots, but focusing on the bad actors wastes everyone’s time. Invitation spaces focus on the people who want to discuss a topic, not make money or cause chaos.
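The open area and the invitation space differ only in their default for personas the owner has not yet decided about. This is a minimal Python sketch under stated assumptions; the `Space` class and its methods are hypothetical.

```python
# Hypothetical sketch of the Space Entry Rule; all names are illustrative.
from dataclasses import dataclass, field


@dataclass
class Space:
    owner: str
    invitation_only: bool = False
    invited: set[str] = field(default_factory=set)
    banned: set[str] = field(default_factory=set)

    def _check_owner(self, actor: str) -> None:
        if actor != self.owner:
            raise PermissionError("only the space owner controls entry")

    def ban(self, actor: str, persona: str) -> None:
        self._check_owner(actor)   # the owner decides unilaterally
        self.banned.add(persona)
        self.invited.discard(persona)

    def invite(self, actor: str, persona: str) -> None:
        self._check_owner(actor)
        self.invited.add(persona)
        self.banned.discard(persona)

    def may_enter(self, persona: str) -> bool:
        if persona == self.owner:
            return True
        if persona in self.banned:
            return False
        # Open spaces admit anyone not banned; invitation spaces admit only the invited.
        return persona in self.invited or not self.invitation_only
```

In an open space the owner reacts to bad actors one by one; in an invitation space nobody has to be screened out, because only those who add value are let in.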

Ownership Rule: The administrator of an entity can re-allocate its rights and the owner can edit it.

Current society reduces physical conflict through social ownership (Freeden, 1991), which is something software can support and people can understand (Rose, 2000). Clarifying who owns what online reduces conflict; for example, the Internet Corporation for Assigned Names and Numbers (ICANN) coordinates the registration of who owns Internet domain names to avoid disputes. More complex is the case of a landlord and a tenant, where the landlord has the title and the tenant has the use. In current society, the fact that the landlord owns the letterbox doesn’t give them the right to read a tenant’s mail. Facebook’s Cambridge Analytica problem reflects confusion over who owns personal data: Facebook, or the people who put it there. Does being the landlord of an online space give the right to “photograph” tenants’ possessions in order to direct ads at them? As people wise up, Facebook can’t forever pretend to respect privacy while denying tenants choice over how their data is used. Its future success in attracting tenants will depend on what sort of landlord it wants to be. Many people feel powerless on the Internet only because software makes them so.
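The landlord and tenant split maps onto two separate roles on one entity: an administrator who re-allocates rights and an owner who edits. This is a minimal Python sketch under stated assumptions; the `Entity` class and the `reallocate` and `edit` functions are hypothetical, and only editing is modelled, not read access.

```python
# Hypothetical sketch of the Ownership Rule; all names are illustrative.
from dataclasses import dataclass


@dataclass
class Entity:
    administrator: str   # may re-allocate the entity's rights (the landlord's title)
    owner: str           # may edit the entity (the tenant's use)
    content: str = ""


def reallocate(actor: str, entity: Entity, new_owner: str) -> None:
    if actor != entity.administrator:
        raise PermissionError("only the administrator re-allocates rights")
    entity.owner = new_owner


def edit(actor: str, entity: Entity, content: str) -> None:
    # Holding the title does not confer use: the administrator cannot edit
    # unless it is also the owner.
    if actor != entity.owner:
        raise PermissionError("only the owner edits the entity")
    entity.content = content
```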

Creation Rule: The right to create in a space resides inherently with the space owner.

In this model, the space owner holds the right to edit it, and submitting into a space is an edit of that space. For a physical equivalent, submitting to an online space is like an artist delivering a painting to a gallery, where it is received at the door and hung by the gallery staff. This does not mean the artist has no rights, as they may lend their painting on the condition it is given back. Or the space may be a workshop where artists paint as people watch. Or it may be a graffiti area where artists draw on the walls knowing their work cannot be taken back. The online world offers all these options: online journals like First Monday let you submit but not take back, online galleries like Google Photos and DeviantArt let you post and take back, while most forums don’t let people edit or delete posts. An interesting case is Photobucket, which in 2017 suddenly made people pay $400 a year for sharing what was previously free, so thousands of forums lost the pictures in their posts. The issue was not that it was now charging but that it was holding photos submitted under previous terms of service hostage for a $400 ransom. Imagine a free display gallery locking your photos away until you paid its new charges! It lost business and in 2018 reduced the charge to $30, hoping to win users back. Companies ignore the social level at their peril.

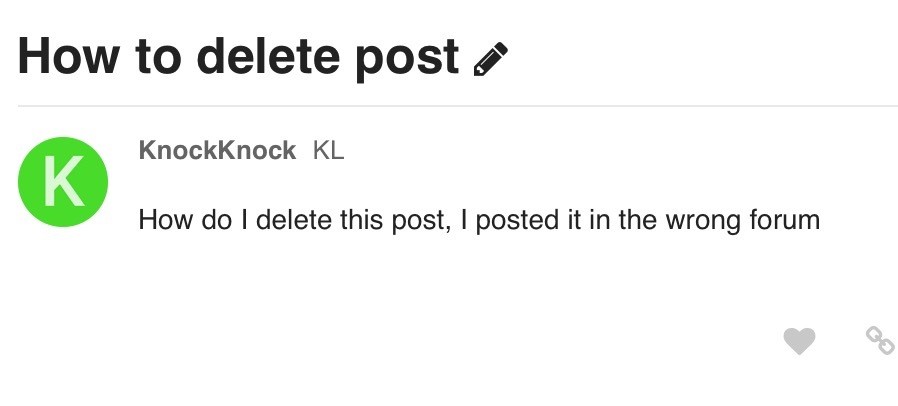

Innovation Rule: The creator of a new entity immediately gains all rights to it in their namespace.

A common complaint in forums is people posting in the wrong thread. Regular contributors report “newbies” who post a question in a new thread instead of an established one, but often it is just a genuine error the software doesn’t let them fix. And the moderators who can move items often feel like parents picking up after untidy children. But why is changing the parent a restricted function? Why not let the person who posted do that? If people initially owned what they posted in their namespace, they could unsubmit it and resubmit it in the right place, i.e. be responsible for their own posts. Then when I make a posting mistake, as we all do, I can fix it myself. In general, delegating rights encourages responsibility.
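If the creator owns the post from the start, moving it to the right thread is just an unsubmit followed by a submit, done by the creator rather than a moderator. This is a minimal Python sketch under stated assumptions; the `Thread` and `Post` classes and the `submit` and `unsubmit` functions are hypothetical, and the forum owner's separate control over display is left out.

```python
# Hypothetical sketch of the Innovation Rule; all names are illustrative.
from dataclasses import dataclass, field
from typing import Optional


@dataclass(eq=False)  # identity comparison: two posts with the same text are still distinct
class Thread:
    title: str
    posts: list["Post"] = field(default_factory=list)


@dataclass(eq=False)
class Post:
    creator: str                      # the creator owns the post from the moment it exists
    text: str
    thread: Optional[Thread] = None   # where it is currently submitted, if anywhere


def unsubmit(actor: str, post: Post) -> None:
    if actor != post.creator:
        raise PermissionError("only the creator can withdraw a post")
    if post.thread is not None:
        post.thread.posts.remove(post)
        post.thread = None


def submit(actor: str, post: Post, thread: Thread) -> None:
    # Resubmitting elsewhere is unsubmit followed by submit, so no moderator is needed.
    unsubmit(actor, post)
    thread.posts.append(post)
    post.thread = thread
```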

Transparency Rule: People have the right to know in advance what rights they are giving to others.

Social transparency is the right to know when rights are being exchanged. Creative Commons licenses show that giving rights needn’t be as complicated as end-user license agreements (EULAs) suggest. This model clarifies standard questions like “Do I give up administrative ownership?” and “Can I delete my post?”

An interesting case is whether software has the right to secretly record what you do online. The acceptance of CCTV cameras in Britain surprised privacy advocates, but to enter a public area like a park by choice is to implicitly consent to being viewed. Privacy is not violated if there is implied informed consent. People accept CCTV cameras in plain view because the aim is the public good and the recording is known, as is how the information is used. In contrast, people object to being “spied on” without their knowledge, as they want to know when they are being watched. Hence under the US Fourth Amendment, wiretapping generally requires a warrant approved by a judge acting on behalf of the community. The principle that one should not violate everyone’s rights to catch a few offenders was built into the US Constitution by its founders to prevent indiscriminate monitoring.

Welcome to Spyworld? Today, technology gives the power to store every phone call, text, e-mail, tweet and online post, every day, forever. What Edward Snowden blew the whistle on was that nobody knew the US PRISM program was tapping Internet communications on a massive scale, including those of its own citizens. That what you post online can come back to haunt you at any time will have the same chilling effect on the Internet as a police state does: it stops the bad guys, and everyone else as well. In this model, people grant others the right to view them, so any space monitoring should be declared before entry, letting people consent (or not) before entering the space. Monitoring per se is not wrong, but secretly spying on people is. Unless social transparency is reinvented online, the benefit of the Fourth Amendment will be lost and everyone will use VPNs. If it’s not OK to wiretap a person without a warrant, it’s not OK to wiretap the Internet.
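Declaring monitoring before entry can be sketched as a space that shows a notice, admits a persona into a monitored space only if they accept it, and records only what happens after that consent. This is a minimal Python sketch under stated assumptions; the `Space` class, its notice text and its methods are hypothetical.

```python
# Hypothetical sketch of monitoring under the Transparency Rule; all names are illustrative.
from dataclasses import dataclass, field


@dataclass
class Space:
    name: str
    monitored: bool
    present: set[str] = field(default_factory=set)
    log: list[tuple[str, str]] = field(default_factory=list)

    def entry_notice(self) -> str:
        # Any monitoring is declared before entry, not discovered afterwards.
        return f"{self.name} is monitored" if self.monitored else f"{self.name} is not monitored"

    def enter(self, persona: str, accepts_notice: bool) -> bool:
        # Entering a monitored space requires informed consent.
        if self.monitored and not accepts_notice:
            return False
        self.present.add(persona)
        return True

    def act(self, persona: str, action: str) -> None:
        if persona not in self.present:
            raise PermissionError(f"{persona} has not entered {self.name}")
        if self.monitored:
            self.log.append((persona, action))  # recorded only because it was declared
```

A monitored park and an unmonitored home can both exist; what the sketch rules out is a space that records without having shown its notice first.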

Display Rule: Displaying an entity in a space requires the consent of both its owner and the space owner.

Initially, online spaces like YouTube and Facebook considered themselves not responsible for what people posted, but this model makes clear that they are. If a space owner controls what displays in their space, they are responsible for posts against the public good because they can stop them. Hence Zuckerberg told Congress “I agree we are responsible for the content” on Facebook, despite previously claiming otherwise, and Twitter, Google and other online giants will follow suit. Tech companies are not passive platforms on which people put content; hence a UK parliamentary committee called for “clear legal liability” for tech companies “to act against harmful and illegal content”, with failure to act resulting in criminal proceedings. How can companies that harvest people’s data for advertising then disavow control of, and thus responsibility for, that content? That Facebook already faces a £500,000 fine over the Cambridge Analytica data harvesting suggests more to come, and on July 26, 2018, amid these concerns, it lost about US$120 billion in market value, the biggest one-day drop in US stock-market history. It does not pay to go against society.
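The rule amounts to a conjunction of two consents, either of which can be withdrawn. This is a minimal Python sketch under stated assumptions; the `Placement` class and its methods are hypothetical, and each party is assumed to be identified by a simple name.

```python
# Hypothetical sketch of the Display Rule; all names are illustrative.
from dataclasses import dataclass, field


@dataclass
class Placement:
    """An entity placed in a space; it displays only while both owners consent."""
    entity_owner: str
    space_owner: str
    consents: set[str] = field(default_factory=set)

    def consent(self, actor: str) -> None:
        if actor not in (self.entity_owner, self.space_owner):
            raise PermissionError(f"{actor} has no say over this display")
        self.consents.add(actor)

    def withdraw(self, actor: str) -> None:
        self.consents.discard(actor)

    @property
    def displayed(self) -> bool:
        return {self.entity_owner, self.space_owner} <= self.consents
```

Because the space owner's `withdraw` always ends the display, the space owner can stop any post, which is the basis of the responsibility argument in the paragraph on platforms' liability for content.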

Delegation Rule: Delegating a simple right does not give the right to delegate that right.

Long ago, absolute monarchs had all rights to all things in their kingdom, but those times are long gone. In 1215, Magna Carta asserted a person’s right not to be unlawfully detained, the principle behind habeas corpus (Latin “that you have the body”). Today, states grant people rights to their own property, including “spaces” like their own home. Such rights are not absolute but delegated. Applying these ideas to online society gives each persona freedom and the right to initially own what they create, following Locke. In this model, the system administrator, who is indeed initially the absolute ruler of their kingdom, can choose to delegate as follows:

- Create all spaces then delegate. The administrator creates every space and then delegates each one directly, so retains ultimate control and can remove any space owner at will. Companies follow this model of control.

- Create first spaces then delegate. The administrator creates the first spaces then delegates them. This lets those space owners create sub-spaces that they own. The US and its states follow this model.
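The difference between a simple grant and a grant that may be passed on can be sketched with a flag per (holder, right) pair, much as SQL's `GRANT ... WITH GRANT OPTION` distinguishes the two. This is a minimal Python sketch under stated assumptions; the `RightsTable` class and its method names are hypothetical, and revocation is not modelled.

```python
# Hypothetical sketch of the Delegation Rule; all names are illustrative.
class RightsTable:
    def __init__(self, administrator: str, rights: set[str]) -> None:
        # (holder, right) -> may the holder delegate it further?
        self._grants: dict[tuple[str, str], bool] = {(administrator, r): True for r in rights}

    def holds(self, persona: str, right: str) -> bool:
        return (persona, right) in self._grants

    def delegate(self, grantor: str, grantee: str, right: str,
                 with_delegation: bool = False) -> None:
        # Only a holder who was given the delegation right may pass a right on,
        # and a simple grant (the default) does not include that right.
        if not self._grants.get((grantor, right), False):
            raise PermissionError(f"{grantor} may not delegate {right!r}")
        already = self._grants.get((grantee, right), False)
        self._grants[(grantee, right)] = already or with_delegation
```

In the "create first spaces" model, the administrator might grant a state the right to create spaces together with the right to delegate it, while a county receiving a simple grant from the state can create spaces but cannot hand that right to a town.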

In this model, delegation is a trade-off between the creative productivity of free people and the absolute control of unwilling slaves. What doesn’t work, as history shows, is to delegate creative freedom and then treat people as slaves by taking what they produce. That delegating does not give the right to delegate limits what is given, but does not change the underlying balance. Previously people fought wars over land, but today they fight over information. If the Internet becomes a behavior modification tool on a massive scale, whether for profit or politics, then information wars will kill creativity just as physical wars do. Perhaps one day humanity will agree that everyone winning is better than everyone losing.

Allocation Rule: Allocating a right that makes a person responsible for an existing entity requires their consent.

Accepting a delegated right is a choice, so one has the right to resign a delegation at any time, i.e. give the right back to its administrator. Giving people freedom not to contribute to what they don’t like allows a talent market, as those who behave badly lose competent support. This model advocates freedom in all its forms as a fundamental Internet principle. People often underestimate the power they have on the Internet not to participate, as to participate in a thing is to enable it. Gandhi’s non-violent “rebellion” in India was essentially about not participating in an illegitimate rule. The Internet is a mirror to humanity as never before. A global community deciding to boycott an online space would empty it of citizens, making it a social failure. Social earthquakes, like their physical counterparts, are rare, but when everybody agrees on something, they are hard to stop.
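Consent and resignation can be sketched as an offer that the persona must accept, with responsibility falling back to the administrator whenever nobody holds it, so that every right stays allocated to some actor. This is a minimal Python sketch under stated assumptions; the `Allocation` class and its method names are hypothetical, and entities are identified by plain strings.

```python
# Hypothetical sketch of the Allocation Rule; all names are illustrative.
class Allocation:
    def __init__(self, administrator: str) -> None:
        self.administrator = administrator
        self._offers: dict[str, str] = {}        # entity -> persona it has been offered to
        self._responsible: dict[str, str] = {}   # entity -> persona who accepted it

    def responsible_for(self, entity: str) -> str:
        # A right nobody has accepted stays with the administrator, so it is never unallocated.
        return self._responsible.get(entity, self.administrator)

    def offer(self, actor: str, entity: str, persona: str) -> None:
        if actor != self.administrator:
            raise PermissionError("only the administrator allocates")
        self._offers[entity] = persona

    def accept(self, persona: str, entity: str) -> None:
        # Responsibility passes only when the persona consents.
        if self._offers.get(entity) != persona:
            raise PermissionError(f"{entity!r} was not offered to {persona}")
        del self._offers[entity]
        self._responsible[entity] = persona

    def resign(self, persona: str, entity: str) -> None:
        # Resigning gives the right back to the administrator at any time.
        if self._responsible.get(entity) != persona:
            raise PermissionError(f"{persona} is not responsible for {entity!r}")
        del self._responsible[entity]
```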

In conclusion, the Internet is not a new world but the old world of social interaction in a new context. Since those who do not learn from history are doomed to repeat it, it behooves us to recognize in code what is socially good. Others are invited to help develop social standards for software design, lest by ignorance the Internet experience its own dark age.